Can AI Detect Deepfake Video Calls Live?

Your CFO joins a Zoom call and asks the finance team to transfer $25 million. The face looks right. The voice matches. Forty minutes later, the real CFO learns that no such transfer was ever planned. The Arup fraud in early 2024 unfolded in just this way, and detection did not save the company. No Zoom plugin flagged the deepfake. No audio analyzer caught the clone.

That raises the obvious question: can AI detect deepfake video calls in real time? And if the tools exist, why did Arup lose $25 million?

The short answer

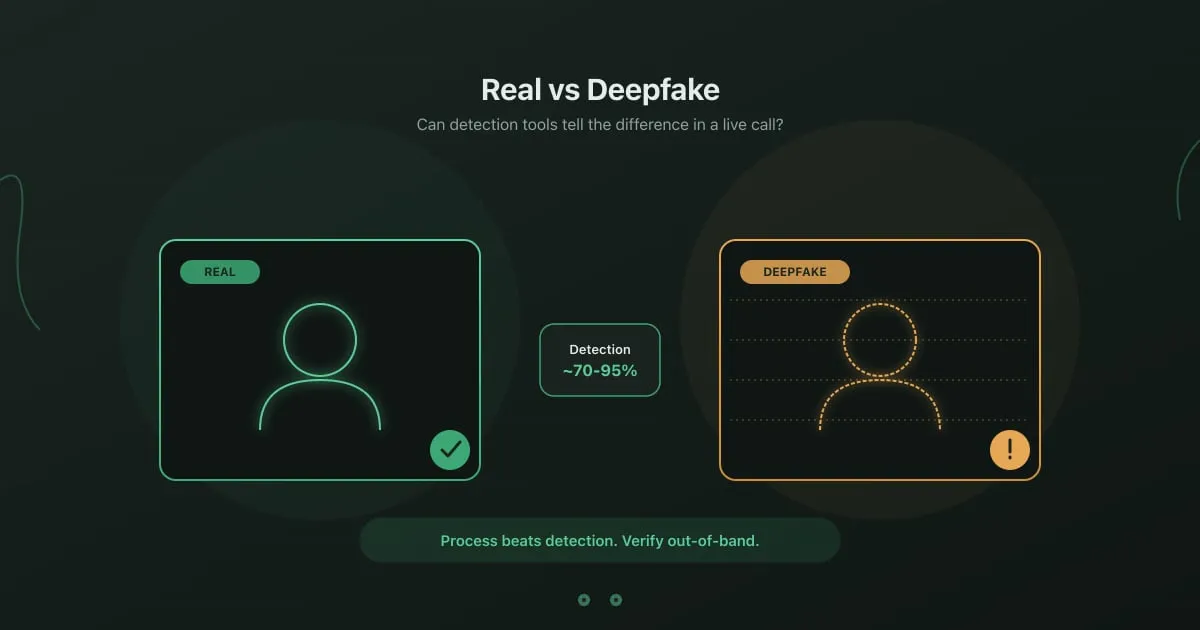

Partially. Enterprise deepfake detection platforms exist and work in lab conditions. In live production calls, detection accuracy degrades as generation quality improves. As of 2026, the major consumer video platforms (Zoom, Microsoft Teams, Google Meet) do not ship reliable built-in detection.

For any high-stakes request that arrives on a live call, process verification (a callback to a known number, pre-agreed code words, out-of-context questions about internal matters) remains more reliable than any real-time detection tool on the market.

What is real-time deepfake detection?

Real-time deepfake detection is the automated analysis of video or audio streams during a live call, with the goal of spotting synthetic media before a person acts on the request. Detection systems look for signals in three layers: pixel-level artifacts left by GAN generation; physiological inconsistencies such as irregular blink patterns, a missing pulse signal in skin color, and lip-sync mismatches; and temporal anomalies, including frame-rate inconsistencies and compression artifacts. Voice detection adds spectral analysis of clone fingerprints and of prosodic irregularities in rhythm and intonation. According to Regula's 2024 survey, 49 percent of businesses worldwide had encountered deepfake fraud, and detection vendors responded by releasing real-time plugins for enterprise video platforms. Accuracy varies widely: vendor claims of 95 percent detection refer to lab datasets built from the output of known generators. Against a new generator the detector has never seen, accuracy can fall below 60 percent, which is why production deployments rarely reach benchmark levels.

Which vendors offer real-time detection in 2026?

Reality Defender. Browser-based and API detection for video and audio. Claims about 95 percent accuracy on known generators, lower on new models. Used by large banks for call-center fraud screening.

Pindrop. Voice-focused detection with phoneme-level analysis. Strong on call-center use cases. Integrated with Zoom Contact Center.

Intel FakeCatcher. Uses photoplethysmography (detecting blood flow through changes in skin color) to identify real people on video. Claims real-time detection but requires specific lighting conditions.

Microsoft Video Authenticator. Part of Microsoft's responsible AI tooling. As of 2026, not packaged as a real-time plugin for Teams.

DuckDuckGoose, Sensity, Truepic. Smaller players focused on specific verticals such as identity verification and media forensics.

All of them target enterprise buyers, not consumer video-calling platforms. Pricing starts at roughly $50,000 per year for mid-market deployments and scales with usage.

Why is real-time detection hard?

The fundamental problem is speed. A detector that needs two seconds to analyze a video frame cannot keep up with a live call at 30 fps.
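To make the speed gap concrete, here is a small arithmetic sketch. The 30 fps rate and the two-second analysis time come from the example above; the function names are illustrative and do not belong to any vendor's API.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to process one frame without falling behind, in ms."""
    return 1000.0 / fps


def frames_elapsed(latency_s: float, fps: float) -> int:
    """Number of new frames that arrive while one frame is being analyzed."""
    return round(latency_s * fps)


if __name__ == "__main__":
    fps = 30.0
    latency_s = 2.0  # analysis time per frame, taken from the example in the text
    print(f"budget per frame: {frame_budget_ms(fps):.1f} ms")
    print(f"frames arriving during one analysis: {frames_elapsed(latency_s, fps)}")
```

At 30 fps each frame leaves roughly 33 ms of processing time, so a two-second detector falls about 60 frames behind for every frame it scores. In practice that forces either sparse frame sampling or a much lighter classifier.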

Four technical constraints make real-time detection hard in practice.

Compute cost. Running a deep learning classifier on every video frame requires GPU resources that most video-calling infrastructure does not allocate per participant. Lighter classifiers trade accuracy for speed.

Adaptation to new generators. Detectors trained on 2023 GAN output often miss video from 2025 diffusion models. Retraining cycles lag attacker innovation by weeks or months.

Compression and network artifacts. Legitimate video compression, packet loss, and codec variations create artifacts that resemble deepfake artifacts. As a result, the false-positive rate climbs quickly on ordinary calls.

Lighting and angle variability. Physiological detection methods (blink rate, pulse detection) depend on stable lighting and frontal camera angles. Real video calls often have neither.
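The false-positive constraint can be illustrated with base-rate arithmetic. The numbers below are assumptions chosen purely for illustration (one deepfake per 1,000 calls, a 95 percent detection rate, a 2 percent false-positive rate); they are not measurements from any product.

```python
def alert_precision(prevalence: float,
                    detection_rate: float,
                    false_positive_rate: float) -> float:
    """Share of alerts that point at a real deepfake (Bayes' rule)."""
    true_alerts = prevalence * detection_rate
    false_alerts = (1.0 - prevalence) * false_positive_rate
    return true_alerts / (true_alerts + false_alerts)


if __name__ == "__main__":
    # All three inputs are hypothetical values, not vendor figures.
    precision = alert_precision(prevalence=0.001,
                                detection_rate=0.95,
                                false_positive_rate=0.02)
    print(f"share of alerts that are real deepfakes: {precision:.1%}")
```

Under those assumed numbers, fewer than 5 percent of alerts correspond to a real deepfake. Because genuine calls vastly outnumber fraudulent ones, even a small rise in the false-positive rate pushes that share down further.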

The Arup case illustrates the production gap. Several people on that call were synthetic. If reliable real-time detection existed at the consumer level, finance teams would have had a Zoom plugin that flagged the fraud. No such plugin exists. After the incident, Arup introduced internal verification controls rather than a technical fix based on detection.

What does real-time detection catch in practice?

Real-world performance depends heavily on who generated the deepfake.

Commodity tools such as DeepFaceLive or consumer face-swap apps are detected reasonably well. They produce identifiable artifacts and run on known generators.

Custom models trained on a specific target are harder to detect. An attacker who trains a dedicated model on an executive's public YouTube appearances can produce output that classifiers miss.

Real-time face-swap on modern GPUs is not reliably detected. Latency constraints force the use of fast classifiers with lower accuracy.

Voice cloning via VALL-E, ElevenLabs, and consumer text-to-speech services is more detectable than video. Phoneme analysis and spectral fingerprinting work better against audio than pixel-level methods do against video.

The pattern across vendor benchmarks and independent evaluations is consistent: detection tools perform well against the generators they were trained on and poorly against everything else. The gap closes slowly because training data for the newest generators is always scarce.

Why process verification still wins

Every security team that has reviewed a deepfake incident reaches the same conclusion: detection is a secondary defense. The primary defense is procedural.

A callback to a known number defeats a deepfake regardless of its quality. A pre-agreed code word shared over a different channel cannot be cloned. An out-of-context question about internal, non-public information trips up an impersonator working from public research.

These controls do not require AI. They do not degrade as generators improve. They work on consumer Zoom calls where no detection plugin is installed. They also extend naturally to vishing calls, BEC emails, and AI phishing, which attackers increasingly combine with deepfakes in multi-channel campaigns.
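As a rough illustration of how the callback control could be encoded in a workflow tool, here is a minimal sketch. The request categories, the directory of numbers on file, and every name in the code are hypothetical; the point is only that approval depends on a callback to the number already on file, never on a number supplied during the call.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical categories that always require a callback before action.
CALLBACK_REQUIRED = {"payment", "bank_detail_change", "credential_reset"}

# Hypothetical directory of numbers on file, maintained outside the call.
KNOWN_NUMBERS = {"cfo": "+1-555-0100"}


@dataclass
class Request:
    category: str
    requester: str
    callback_number_used: Optional[str] = None  # number actually dialed back


def may_proceed(req: Request) -> bool:
    """Allow a sensitive request only after a callback to the number on file."""
    if req.category not in CALLBACK_REQUIRED:
        return True
    known = KNOWN_NUMBERS.get(req.requester)
    # A number offered by the caller during the call does not count.
    return known is not None and req.callback_number_used == known
```

For example, `may_proceed(Request("payment", "cfo"))` is rejected until someone has dialed the number on file, however convincing the face and voice on the call were.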

For the mechanics of the attack and the reasons deepfakes succeed beyond the technical challenge, see our guide to deepfake social engineering.

When is investing in detection justified?

Real-time detection is a reasonable layer in three specific scenarios.

Call centers with high verification volumes. Banks, insurers, and identity verification services handle thousands of calls a day. Automated detection catches a meaningful share of commodity fraud before it reaches human agents.

Media and newsroom operations. News organizations verifying submitted video benefit from forensic detection on pre-recorded content, which is materially more accurate than detection on live calls.

Executive protection programs. Organizations with known high-value targets can justify the cost of a detection layer on video calls involving those individuals. Detection does not replace procedural verification; it complements it.

For most organizations, the ROI of a detection platform costing $50,000 to $200,000 per year does not beat the ROI of training finance staff, HR, and executive assistants to make a callback every time. A detection tool does not by itself change how people behave, while a verification habit is cheap to write into policy.

What should organizations do instead?

Four actions matter more than buying a detection plugin.

- Write callback verification into every financial and credential-related process. No exceptions, even when the request looks routine.

- Issue rotating code words to executives and their assistants. Update them weekly and distribute them over a channel separate from the ones on which you verify requests.

- Train employees to expect deepfakes on live calls. Show convincing examples so that the default assumption becomes: a face and a voice do not authenticate the speaker.

- Build a low-friction reporting path. Employees who sense that a call was "somehow off" but cannot articulate why should have a one-click way to flag it for security review.
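One possible way to handle the weekly rotation is sketched below. It assumes something the list above does not prescribe: instead of sending a new word every week, both parties hold a shared secret provisioned once over a separate channel and derive the current week's code from it. The word list, function names, and secret handling are all hypothetical, and the tiny word list is for illustration only.

```python
import hashlib
import hmac
from datetime import date

# Hypothetical 16-entry word list; a real deployment would use a far larger one.
WORDS = ["amber", "birch", "cedar", "delta", "ember", "fjord", "grove", "harbor",
         "indigo", "juniper", "kestrel", "lagoon", "maple", "nectar", "orchid", "pebble"]


def weekly_code(secret: bytes, day: date, n_words: int = 3) -> str:
    """Derive the code for the ISO week containing `day` from a shared secret."""
    iso_year, iso_week, _ = day.isocalendar()
    period = f"{iso_year}-W{iso_week:02d}".encode()
    digest = hmac.new(secret, period, hashlib.sha256).digest()
    return "-".join(WORDS[b % len(WORDS)] for b in digest[:n_words])


def verify_code(secret: bytes, day: date, spoken: str) -> bool:
    """Compare what the caller said with this week's code in constant time."""
    expected = weekly_code(secret, day).encode()
    return hmac.compare_digest(expected, spoken.strip().lower().encode())
```

The code changes whenever the ISO week changes, so a phrase overheard on one call stops being useful the following week. The secret itself should never be spoken or shown on the channel being verified.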

Interactive practice matters more here than any slide deck. Our Whaling with a Deepfake exercise puts employees in a realistic deepfake video call scenario modeled on the Arup case, so that the verification reflex is rehearsed before a real attack lands.

The honest answer

Can AI detect deepfake video calls in real time? Some of them, some of the time, if you buy the right enterprise platform and the attacker uses a generator your platform has already seen.

That is not a strategy. Organizations that manage deepfake risk well in 2026 treat detection as a useful supplement and procedural verification as the actual defense. Attackers will always have access to generators that classifiers have not yet seen. They will not always have access to your pre-agreed code words.

Train your team to verify identity when faces and voices cannot be trusted. Try our free Whaling with a Deepfake exercise, based on the $25 million Arup fraud, practice handling an AI-cloned executive voice over the phone with Deepfake Audio Detection, or browse the full security awareness training catalog for more hands-on exercises.