Samsung’s semiconductor division banned ChatGPT in May 2023 after three employees leaked confidential data in under a month. One engineer pasted proprietary source code to debug an error. Another submitted internal meeting notes to generate a summary. A third uploaded chip manufacturing measurements to get yield calculations. Each person was trying to do their job faster. Each left a copy of Samsung’s trade secrets on an OpenAI server.

Samsung was not alone. By mid-2023, Apple, JPMorgan, Bank of America, Verizon, Amazon, Goldman Sachs, and Deutsche Bank had all restricted employee use of ChatGPT, several of them months before Samsung did. The calculus was the same at every company. The productivity gains were real, but so was the risk that employees would turn consumer AI tools into a data exfiltration channel nobody had authorized.

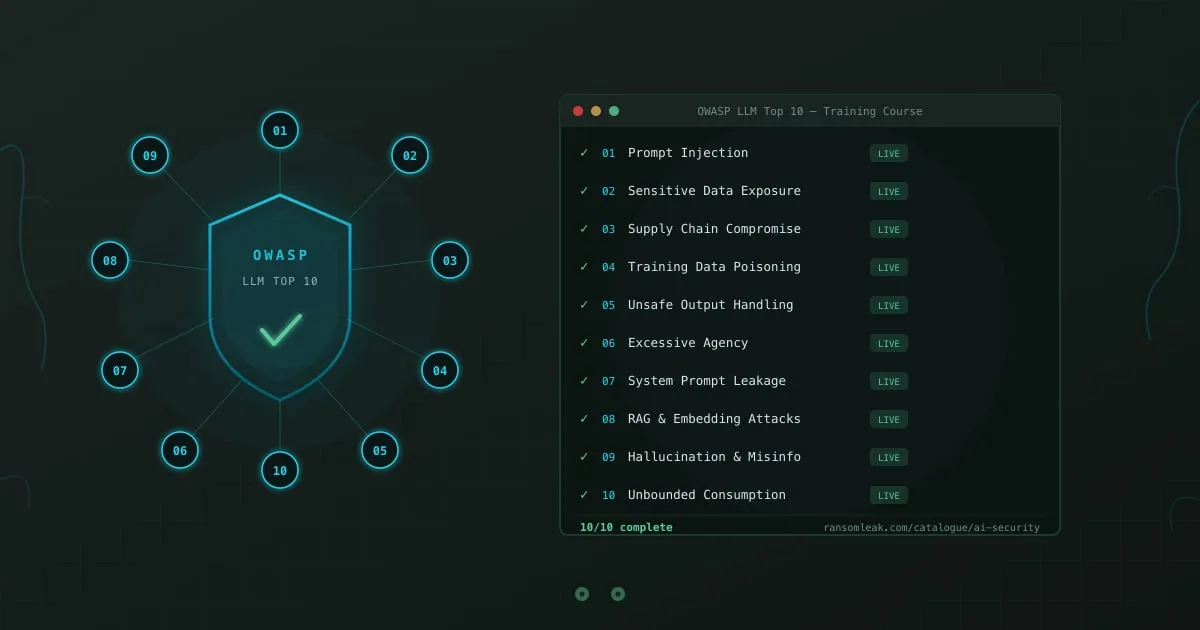

Two years later, the bans have softened into policies, and the policies have softened into training gaps. Most employees still don’t understand what happens to the text they paste into an AI chat window. That gap is the core of OWASP LLM02, Sensitive Information Disclosure, which ranks second on the 2025 OWASP Top 10 for LLM Applications.