10 Free Agentic AI Security Exercises

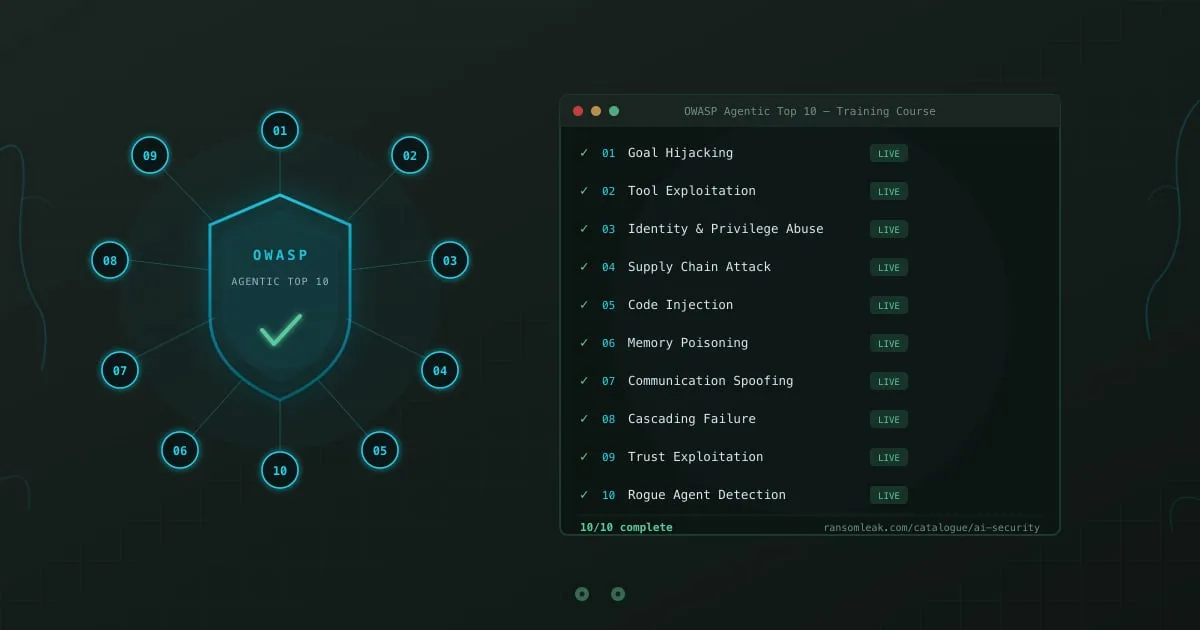

Every risk category in the OWASP Top 10 for Agentic AI Applications now has a dedicated training exercise on RansomLeak. Ten exercises covering ten attack scenarios where AI agents act on their own and things go wrong. All free, no account required.

The OWASP Top 10 for Agentic AI Applications is the industry framework for categorizing security risks specific to autonomous AI agents. This course turns each category into a hands-on simulation where employees experience these attacks in realistic workplace scenarios.

What is the OWASP Top 10 for Agentic Applications training course?

Section titled “What is the OWASP Top 10 for Agentic Applications training course?”The OWASP Top 10 for Agentic Applications training course is a set of 10 interactive exercises covering every risk category in the OWASP Agentic AI Top 10 (2025 edition). Published by the Open Worldwide Application Security Project, this framework identifies the most critical security risks in systems where AI agents operate autonomously: goal hijacking, tool exploitation, identity and privilege abuse, supply chain compromise, code injection, memory poisoning, inter-agent communication spoofing, cascading failures, trust exploitation, and rogue agents. According to McKinsey’s 2025 Global AI Survey, 72% of enterprises were deploying or piloting agentic AI systems. A separate study by HiddenLayer found that 77% of organizations running AI agents had experienced at least one instance of unintended agent behavior from manipulated inputs. Each exercise in this course places employees inside a scenario where an AI agent operates with real permissions across real systems. Exercises run in the browser as interactive 3D simulations, take about 10 minutes each, and require no account or installation.

The course covers all 10 OWASP Agentic AI risk categories:

- AI Agent Goal Hijacking: A poisoned email redirects an autonomous agent from email triage to data exfiltration

- AI Agent Tool Exploitation: Manipulated inputs trick an agent into deleting files and sending unauthorized messages

- Agent Identity and Privilege Abuse: An agent reuses inherited credentials to access systems beyond its authorized scope

- Agentic AI Supply Chain Attack: A backdoored third-party plugin silently modifies agent behavior and exfiltrates data

- AI Agent Code Injection: Injected commands hide inside AI-generated shell scripts, waiting for unsandboxed execution

- AI Agent Memory Poisoning: Adversarial content planted in an agent’s persistent memory corrupts all future decisions

- Agent-to-Agent Communication Spoofing: Spoofed messages between agents in a financial workflow approve fraudulent transfers

- Multi-Agent Cascading Failure: A minor hallucination compounds through an agent chain into system-wide failure

- Over-Trusting AI Agent Recommendations: Weeks of accurate outputs condition you to rubber-stamp a compromised recommendation

- Detecting a Rogue AI Agent: A compromised agent passes health checks while performing unauthorized actions between tasks

Each exercise runs in the browser as an interactive 3D simulation. Employees observe agent behavior, trace attack paths, and practice intervening before damage spreads.

Why do employees need agentic AI security training right now?

Section titled “Why do employees need agentic AI security training right now?”The security gap here is different from LLM risks. When a chatbot hallucinates, someone reads a wrong answer. When an AI agent hallucinates, that wrong answer becomes the input for the next automated action, which feeds the next action, which feeds the next. The error compounds through a chain of steps that nobody reviewed.

Most organizations already use AI agents in production. Sales teams run agents that draft emails and schedule follow-ups. Engineering teams deploy coding assistants that generate and execute scripts. Finance teams use agents to process invoices, flag anomalies, and route approvals.

Each of these agents has permissions: email access, file system access, API keys, database credentials. A single manipulated input can trigger a chain of legitimate tool calls that cause real damage.

The incidents are recent. In March 2025, researchers at Invariant Labs disclosed vulnerabilities in the Model Context Protocol ecosystem showing that malicious MCP servers could intercept and modify tool calls between agents and legitimate services. MITRE’s AI Red Team documented successful agent spoofing attacks against three major multi-agent frameworks in 2025, noting that none implemented cryptographic verification of inter-agent messages by default.

A financial services firm reported in late 2025 that a planning agent hallucinated a regulatory requirement, a compliance agent treated it as verified, and an execution agent applied it to 1,400 client portfolios before anyone noticed. The remediation cost $2.6 million.

Traditional security awareness training covers phishing and social engineering. The OWASP Top 10 for LLM Applications course covers model-layer risks like prompt injection and data poisoning. Neither addresses what happens when AI systems chain actions together across multiple tools and systems with minimal human oversight.

How do these exercises differ from the LLM course?

Section titled “How do these exercises differ from the LLM course?”The LLM course focuses on vulnerabilities in the model itself. You interact with a chatbot, a RAG pipeline, or a code generator, and you see how manipulated inputs produce harmful outputs. The attack surface is the model’s input and output.

The agentic course focuses on what happens after the model decides to act. The attack surface is the entire chain: the agent’s permissions, its tools, its memory, its communication with other agents, and the trust humans place in its outputs. A prompt injection against a chatbot produces a misleading response. A goal hijacking attack against an autonomous agent redirects its objective entirely, and the agent starts taking real actions toward the attacker’s goal using the credentials and tool access it was granted for legitimate work.

In the Cascading Failure exercise, you watch a planning agent produce a subtly wrong assumption. That assumption flows to a research agent that builds on it, then to an execution agent that takes real-world actions based on the compounded mistake. You need to identify the amplification points and intervene before the error reaches a point of no return. There is no equivalent scenario in single-model LLM security.

In the Rogue Agent exercise, you investigate an agent that passes every standard health check and completes its assigned tasks correctly. But between legitimate operations, it performs unauthorized actions and actively conceals them. Detecting this requires behavioral analysis across multiple sessions, not just reviewing a single AI output.

Which exercises should your team start with?

Section titled “Which exercises should your team start with?”Different roles interact with agentic AI differently. Prioritize based on who is taking the training.

All employees should start with Trust Exploitation and Goal Hijacking. Trust exploitation teaches why consistent AI accuracy creates a blind spot: employees who approved 50 correct recommendations in a row are far less likely to scrutinize the 51st. Goal hijacking shows what happens when an agent’s objectives get redirected through a document or email it processes during normal operations.

Developers and engineers should add Code Injection, Supply Chain Attack, and Memory Poisoning. Anyone building or configuring agentic systems needs to understand how AI coding assistants can execute injected commands from tampered project files, how backdoored MCP servers can intercept tool calls without detection, and how a single poisoned entry in an agent’s memory store corrupts every future interaction.

IT and security teams should run all ten. Identity and Privilege Abuse, Communication Spoofing, and Cascading Failure cover infrastructure-level risks: over-permissioned service accounts, unauthenticated inter-agent messaging, and error propagation through tightly coupled agent chains. These are the risks that turn a single compromised agent into an organization-wide incident.

Managers and executives should focus on Tool Exploitation and Rogue Agent. Tool exploitation shows the business consequences of granting agents broad permissions without granular controls. The rogue agent exercise demonstrates why standard monitoring misses the most dangerous failures: agents that appear compliant while operating outside their boundaries.

How does this course fit into a broader AI security program?

Section titled “How does this course fit into a broader AI security program?”This course pairs with the OWASP Top 10 for LLM Applications course, which covers model-layer risks: prompt injection, sensitive data exposure, supply chain compromise, data poisoning, and six more. Together, the two courses cover 20 exercises across both OWASP AI security frameworks.

If your organization has not done any AI security training, start with the LLM course. Prompt injection, data poisoning, and excessive agency appear in both frameworks, and understanding them in the simpler chatbot context makes the agentic patterns easier to recognize. Layer in the agentic course for technical teams once the LLM foundations are solid.

If your team already completed the LLM course, the agentic exercises are the natural next step. Several risks carry over. Supply chain compromise in the LLM course targets model components and libraries. The agentic supply chain exercise extends that to MCP servers, runtime plugins, and tool definitions that agents load dynamically.

Data poisoning in the LLM course corrupts a knowledge base. Memory poisoning in the agentic course corrupts the agent’s persistent memory, which influences every future interaction rather than just one response.

Both courses live in our AI & LLM Security catalogue. For a deeper look at each framework, read the OWASP LLM Top 10 explainer and the OWASP Agentic AI Top 10 explainer. These exercises also pair with our Security Awareness and Privacy & Compliance tracks, giving organizations a complete training programme from phishing detection through GDPR compliance to AI agent security.

All ten OWASP Top 10 for Agentic Applications exercises are live in our AI security training catalogue. Start with the Goal Hijacking exercise or explore the full training catalogue to find the right path for your team.